Time Series Classification Module Tutorial

I. Overview

Time series classification involves identifying and categorizing different patterns in time series data by analyzing trends, periodicity, seasonality, and other factors that vary over time. This technique is widely used in medical diagnosis and other fields, effectively classifying key information in time series data to provide robust support for decision-making.

II. Supported Model List

The inference time only includes the model inference time and does not include the time for pre- or post-processing.

| Model Name | Model Download Link | Acc(%) | Model Storage Size (MB) | Description |
|---|---|---|---|---|
| TimesNet_cls | Inference Model/Training Model | 87.5 | 0.792 | TimesNet is an adaptive, high-accuracy time series classification model based on multi-period analysis |

Test Environment Description:

- Performance Test Environment
  - Test Dataset: UWaveGestureLibrary.
  - Hardware Configuration:
    - GPU: NVIDIA Tesla T4
    - CPU: Intel Xeon Gold 6271C @ 2.60GHz
  - Software Environment:
    - Ubuntu 20.04 / CUDA 11.8 / cuDNN 8.9 / TensorRT 8.6.1.6
    - paddlepaddle 3.0.0 / paddlex 3.0.3

- Inference Mode Description

| Mode | GPU Configuration | CPU Configuration | Acceleration Technology Combination |
|---|---|---|---|
| Normal Mode | FP32 Precision / No TRT Acceleration | FP32 Precision / 8 Threads | PaddleInference |
| High-Performance Mode | Optimal combination of pre-selected precision types and acceleration strategies | FP32 Precision / 8 Threads | Pre-selected optimal backend (Paddle/OpenVINO/TRT, etc.) |

III. Quick Integration

❗ Before quick integration, please install the PaddleX wheel package. For detailed instructions, refer to PaddleX Local Installation Guide

After installing the wheel package, you can perform inference for the time series classification module with just a few lines of code. You can switch models under this module freely, and you can also integrate the model inference of the time series classification module into your project. Before running the following code, please download the demo csv to your local machine.

from paddlex import create_model

model = create_model(model_name="TimesNet_cls")  # load the built-in model by name
output = model.predict("ts_cls.csv", batch_size=1)  # run inference on the demo csv
for res in output:
    res.print()  # print the result to the terminal
    res.save_to_csv(save_path="./output/")
    res.save_to_json(save_path="./output/res.json")

After running, the result obtained is:

{
  "res": {
    "input_path": "ts_cls.csv",
    "classification": [
      {
        "classid": 0,
        "score": 0.617687881
      }
    ]
  }
}

The meanings of the parameters in the running results are as follows:

- input_path: Indicates the path to the input time-series file for prediction.

- classification: Indicates the time-series classification result. classid represents the predicted category, and score represents the prediction confidence. You can save the prediction results to a CSV file using res.save_to_csv() or to a JSON file using res.save_to_json().
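As a minimal sketch of how these fields might be consumed downstream, the snippet below parses the result shown above with the standard library and picks the highest-scoring entry. The helper name `best_prediction` is hypothetical, and the snippet assumes that a file written by `save_to_json()` has the same structure as the printed result.

```python
import json

# Result copied from the printed output above.
raw = """
{"res": {"input_path": "ts_cls.csv",
         "classification": [{"classid": 0, "score": 0.617687881}]}}
"""


def best_prediction(result):
    """Return (classid, score) of the highest-scoring classification entry."""
    entries = result["res"]["classification"]
    best = max(entries, key=lambda entry: entry["score"])
    return best["classid"], best["score"]


classid, score = best_prediction(json.loads(raw))
print(f"predicted class {classid} with confidence {score:.3f}")
```

Using `max` keeps the helper valid even if a result carries more than one classification entry.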

Descriptions of related methods, parameters, etc., are as follows:

- The `create_model` method instantiates a time-series classification model (using `TimesNet_cls` as an example). Specific descriptions are as follows:

| Parameter | Description | Type | Options | Default Value |
|---|---|---|---|---|
| model_name | The name of the model | str | All model names supported by PaddleX | None |
| model_dir | The storage path of the model | str | None | None |
| device | The device used for model inference | str | It supports specifying specific GPU card numbers, such as "gpu:0", other hardware card numbers, such as "npu:0", or CPU, such as "cpu". | gpu:0 |
| use_hpip | Whether to enable the high-performance inference plugin | bool | None | False |
| hpi_config | High-performance inference configuration | dict \| None | None | None |

- `model_name` must be specified. After specifying `model_name`, PaddleX's built-in model parameters are used by default. If `model_dir` is specified, the user-defined model is used.

- The `predict()` method of the time-series classification model is called for inference prediction. The parameters of the `predict()` method are `input` and `batch_size`, with specific descriptions as follows:

| Parameter | Description | Type | Options | Default Value |
|---|---|---|---|---|
| input | Data to be predicted, supporting multiple input types | Python Var/str/list | | None |
| batch_size | Batch size | int | Any integer greater than 0 | 1 |

- The prediction results are processed for each sample. Each prediction result is of type `dict` and supports operations such as printing, saving as a `csv` file, and saving as a `json` file:

| Method | Description | Parameter | Type | Explanation | Default Value |
|---|---|---|---|---|---|
| print() | Print the result to the terminal | format_json | bool | Whether to format the output content with JSON indentation | True |
| | | indent | int | Specify the indentation level to beautify the output JSON data, making it more readable. Only effective when format_json is True | 4 |
| | | ensure_ascii | bool | Control whether non-ASCII characters are escaped to Unicode. When set to True, all non-ASCII characters will be escaped; False retains the original characters. Only effective when format_json is True | False |
| save_to_json() | Save the result as a json file | save_path | str | The file path for saving. When a directory is provided, the saved file name matches the input file name | None |
| | | indent | int | Specify the indentation level to beautify the output JSON data, making it more readable. Only effective when format_json is True | 4 |
| | | ensure_ascii | bool | Control whether non-ASCII characters are escaped to Unicode. When set to True, all non-ASCII characters will be escaped; False retains the original characters. Only effective when format_json is True | False |
| save_to_csv() | Save the result as a time-series csv file | save_path | str | The file path for saving. When a directory is provided, the saved file name matches the input file name | None |
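The `indent` and `ensure_ascii` options share their names with parameters of Python's standard `json.dumps`. Assuming they behave the same way (an assumption, not something this tutorial confirms), the sketch below illustrates their effect using only the standard library. The non-ASCII `input_path` is a made-up value chosen to make the escaping visible.

```python
import json

# Hypothetical result with a non-ASCII path, used only to show the escaping.
result = {
    "res": {
        "input_path": "数据/ts_cls.csv",
        "classification": [{"classid": 0, "score": 0.617687881}],
    }
}

# ensure_ascii=True escapes every non-ASCII character as \uXXXX.
escaped = json.dumps(result, ensure_ascii=True)

# indent=4 pretty-prints; ensure_ascii=False keeps the original characters.
pretty = json.dumps(result, indent=4, ensure_ascii=False)

print(escaped)
print(pretty)
```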

- Additionally, it also supports obtaining the visualized time-series with results and the prediction results via attributes, as follows:

| Attribute | Description |
|---|---|
| json | Get the prediction result in json format |
| csv | Get the time-series classification prediction result in csv format |

For more information on using PaddleX's single-model inference APIs, refer to PaddleX Single Model Python Script Usage Instructions.

IV. Custom Development

If you aim for higher accuracy with existing models, you can leverage PaddleX's custom development capabilities to develop better time series classification models. Before using PaddleX to develop time series classification models, ensure you have installed the PaddleTS plugin. Refer to the PaddleX Local Installation Guide for the installation process.

4.1 Data Preparation

Before model training, you need to prepare the dataset for the corresponding task module. PaddleX provides data validation functionality for each module, and only data that passes validation can be used for model training. Additionally, PaddleX provides demo datasets for each module, which you can use to complete subsequent development. If you wish to use private datasets for subsequent model training, refer to PaddleX Time Series Classification Task Module Data Annotation Tutorial.

4.1.1 Demo Data Download

You can use the following commands to download the demo dataset to a specified folder:

wget https://paddle-model-ecology.bj.bcebos.com/paddlex/data/ts_classify_examples.tar -P ./dataset

tar -xf ./dataset/ts_classify_examples.tar -C ./dataset/

4.1.2 Data Validation

You can complete data validation with a single command:

python main.py -c paddlex/configs/modules/ts_classification/TimesNet_cls.yaml \
    -o Global.mode=check_dataset \
    -o Global.dataset_dir=./dataset/ts_classify_examples

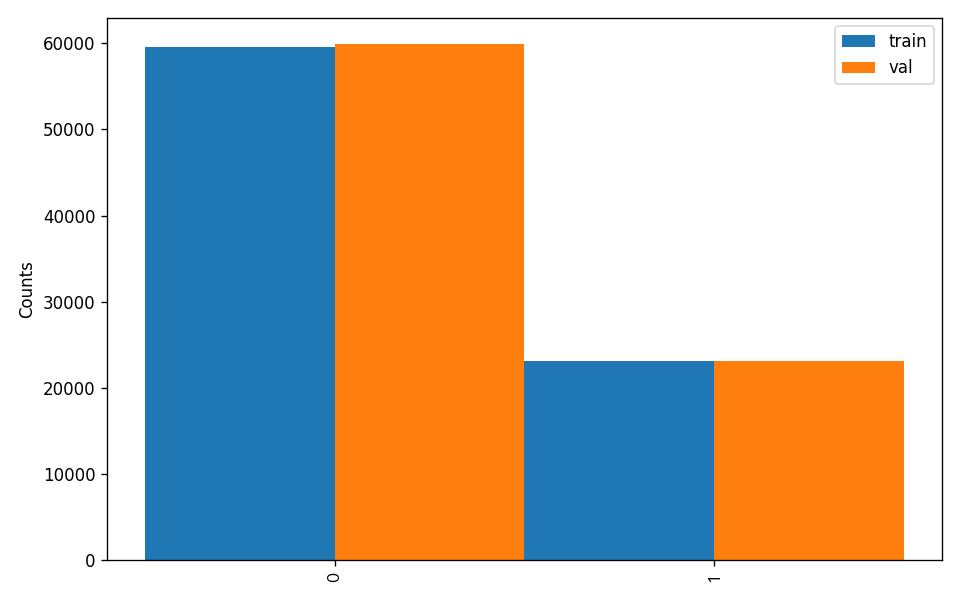

If the command runs successfully, the message Check dataset passed ! is printed in the log. The validation result file is saved in ./output/check_dataset_result.json, and related outputs are saved in the ./output/check_dataset directory under the current directory, including example time series data and class distribution histograms.

👉 Validation Result Details

The specific content of the validation result file is:

{
  "done_flag": true,
  "check_pass": true,
  "attributes": {
    "train_samples": 82620,
    "train_table": [
      ["Unnamed: 0", "group_id", "dim_0", ..., "dim_60", "label", "time"],
      [0.0, 0.0, 0.000949, ..., 0.12107, 1.0, 0.0]
    ],
    "val_samples": 83025,
    "val_table": [
      ["Unnamed: 0", "group_id", "dim_0", ..., "dim_60", "label", "time"],
      [0.0, 0.0, 0.004578, ..., 0.15728, 1.0, 0.0]
    ]
  },
  "analysis": {
    "histogram": "check_dataset/histogram.png"
  },
  "dataset_path": "./dataset/ts_classify_examples",
  "show_type": "csv",
  "dataset_type": "TSCLSDataset"
}

In the validation results above, check_pass being true indicates that the dataset format meets the requirements. Explanations for other indicators are as follows:

- attributes.train_samples: The number of training samples in this dataset is 82620;
- attributes.val_samples: The number of validation samples in this dataset is 83025;
- attributes.train_table: The top 10 rows of the training samples in this dataset;
- attributes.val_table: The top 10 rows of the validation samples in this dataset;

Furthermore, the dataset validation also involved an analysis of the distribution of sample numbers across all categories within the dataset, and a distribution histogram (histogram.png) was generated accordingly.
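To get a feel for what such a class distribution contains, here is a small sketch that counts samples per label from a CSV with the column layout shown in the validation result (`group_id`, `dim_*`, `label`, `time`). It is not necessarily how PaddleX computes its histogram: the toy rows are invented, and the sketch assumes that all rows sharing a `group_id` form one sample with a single label.

```python
import csv
import io
from collections import Counter

# Invented toy data following the column layout of the validation result.
sample_csv = """group_id,dim_0,label,time
0,0.000949,1.0,0
0,0.001200,1.0,1
1,0.004578,2.0,0
1,0.003100,2.0,1
2,0.002000,1.0,0
"""


def class_distribution(rows):
    """Count samples per label, treating each group_id as one sample."""
    label_of_group = {}
    for row in rows:
        label_of_group[row["group_id"]] = row["label"]
    return Counter(label_of_group.values())


distribution = class_distribution(csv.DictReader(io.StringIO(sample_csv)))
for label, count in sorted(distribution.items()):
    print(f"label {label}: {count} sample(s)")
```

To run it on a real file, pass `csv.DictReader(open(path, newline=""))` instead of the in-memory sample.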

Note: Only data that has passed validation can be used for training and evaluation.
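A script that gates training on validation could read the result file before continuing. The sketch below relies only on the fields shown in the validation result above. The helper names `summarize_check` and `load_check_result` are hypothetical, and the demo runs on an in-memory copy of those fields rather than on a real file.

```python
import json
from pathlib import Path


def summarize_check(result):
    """Return a one-line summary of a parsed check_dataset_result.json."""
    if not (result.get("done_flag") and result.get("check_pass")):
        return "dataset check failed or did not finish"
    attrs = result["attributes"]
    return (
        f"ok: {attrs['train_samples']} train / {attrs['val_samples']} val "
        f"samples ({result['dataset_type']})"
    )


def load_check_result(path="./output/check_dataset_result.json"):
    """Load the validation result file written by check_dataset."""
    return json.loads(Path(path).read_text(encoding="utf-8"))


# Demo on the values shown in the validation result above.
sample = {
    "done_flag": True,
    "check_pass": True,
    "attributes": {"train_samples": 82620, "val_samples": 83025},
    "dataset_type": "TSCLSDataset",
}
summary = summarize_check(sample)
print(summary)
```

After a real run, `summarize_check(load_check_result())` would report on the file in `./output/`.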

4.1.3 Dataset Format Conversion/Dataset Splitting (Optional)

After completing data validation, you can convert the dataset format and re-split the training/validation ratio by modifying the configuration file or appending hyperparameters.

👉 Details on Format Conversion/Dataset Splitting

(1) Dataset Format Conversion

Time-series classification supports converting xlsx and xls format datasets to csv format.

Parameters related to dataset validation can be set by modifying the fields under CheckDataset in the configuration file. Examples of some parameters in the configuration file are as follows:

- `CheckDataset`:
  - `convert`:
    - `enable`: Whether to perform dataset format conversion, supporting conversion from `xlsx` and `xls` formats to `CSV` format; default is `False`;
    - `src_dataset_type`: If dataset format conversion is performed, the source dataset format does not need to be set; default is `null`;

To enable format conversion, modify the configuration as follows:

......
CheckDataset:
  ......
  convert:
    enable: True
    src_dataset_type: null
  ......

Then execute the command:

python main.py -c paddlex/configs/modules/ts_classification/TimesNet_cls.yaml \
    -o Global.mode=check_dataset \
    -o Global.dataset_dir=./dataset/ts_classify_examples

The above parameters can also be set by appending command line arguments:

python main.py -c paddlex/configs/modules/ts_classification/TimesNet_cls.yaml \
    -o Global.mode=check_dataset \
    -o Global.dataset_dir=./dataset/ts_classify_examples \
    -o CheckDataset.convert.enable=True

(2) Dataset Splitting

Parameters related to dataset validation can be set by modifying the fields under CheckDataset in the configuration file. Examples of some parameters in the configuration file are as follows:

- `CheckDataset`:
  - `convert`:
    - `enable`: Whether to perform dataset format conversion, `True` to enable; default is `False`;
    - `src_dataset_type`: If dataset format conversion is performed, time-series classification only supports converting xlsx annotation files to csv, so the source dataset format does not need to be set; default is `null`;
  - `split`:
    - `enable`: Whether to re-split the dataset, `True` to enable; default is `False`;
    - `train_percent`: If the dataset is re-split, the percentage of the training set needs to be set: an integer between 0 and 100 whose sum with `val_percent` is 100;
    - `val_percent`: If the dataset is re-split, the percentage of the validation set needs to be set: an integer between 0 and 100 whose sum with `train_percent` is 100;

For example, if you want to re-split the dataset with a 90% training set and a 10% validation set, modify the configuration file as follows:

......
CheckDataset:
  ......
  split:
    enable: True
    train_percent: 90
    val_percent: 10
  ......

Then execute the command:

python main.py -c paddlex/configs/modules/ts_classification/TimesNet_cls.yaml \
    -o Global.mode=check_dataset \
    -o Global.dataset_dir=./dataset/ts_classify_examples

After dataset splitting, the original annotation files will be renamed to xxx.bak in the original path.

The above parameters can also be set by appending command line arguments:

python main.py -c paddlex/configs/modules/ts_classification/TimesNet_cls.yaml \
    -o Global.mode=check_dataset \
    -o Global.dataset_dir=./dataset/ts_classify_examples \
    -o CheckDataset.split.enable=True \
    -o CheckDataset.split.train_percent=90 \
    -o CheckDataset.split.val_percent=10
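When the split is driven from a script, it can help to check the percentage constraint before launching the command. The sketch below validates the two values against the rule stated above (integers between 0 and 100 that sum to 100) and produces the matching `-o` overrides. The helper name `split_overrides` is hypothetical and not part of PaddleX.

```python
def split_overrides(train_percent, val_percent):
    """Validate the split percentages and return the matching -o arguments."""
    for name, value in (("train_percent", train_percent), ("val_percent", val_percent)):
        if not isinstance(value, int) or not 0 <= value <= 100:
            raise ValueError(f"{name} must be an integer between 0 and 100, got {value!r}")
    if train_percent + val_percent != 100:
        raise ValueError("train_percent and val_percent must sum to 100")
    return [
        "-o", "CheckDataset.split.enable=True",
        "-o", f"CheckDataset.split.train_percent={train_percent}",
        "-o", f"CheckDataset.split.val_percent={val_percent}",
    ]


overrides = split_overrides(90, 10)
print(" ".join(overrides))
```

The returned list can be appended to the argument list of the `check_dataset` command shown above.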

4.2 Model Training

Model training can be completed with just one command. Here, we use the time series classification model (TimesNet_cls) as an example:

python main.py -c paddlex/configs/modules/ts_classification/TimesNet_cls.yaml \
    -o Global.mode=train \
    -o Global.dataset_dir=./dataset/ts_classify_examples

You need to follow these steps:

- Specify the `.yaml` configuration file path for the model (here it's `TimesNet_cls.yaml`). When training other models, you need to specify the corresponding configuration files. The relationship between the model and configuration files can be found in the PaddleX Model List (CPU/GPU).
- Set the mode to model training: `-o Global.mode=train`
- Specify the training dataset path: `-o Global.dataset_dir`
- Other related parameters can be set by modifying the `Global` and `Train` fields in the `.yaml` configuration file, or adjusted by appending parameters in the command line. For example, to train using the first two GPUs: `-o Global.device=gpu:0,1`; to set the number of training epochs to 10: `-o Train.epochs_iters=10`. For more modifiable parameters and their detailed explanations, refer to the PaddleX TS Configuration Parameters Documentation.
- New Feature: Paddle 3.0 supports CINN (Compiler Infrastructure for Neural Networks) to accelerate training when using a GPU device. Specify `-o Train.dy2st=True` to enable it.
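The steps above can be assembled programmatically when training is launched from another script. The sketch below builds the argument list for `main.py` from a mode, a dataset directory, and optional overrides. The helper name `build_command` is hypothetical, and the sketch only prints the command instead of running it.

```python
import shlex

CONFIG = "paddlex/configs/modules/ts_classification/TimesNet_cls.yaml"


def build_command(mode, dataset_dir, overrides=None):
    """Build the main.py argument list for the given mode and -o overrides."""
    argv = [
        "python", "main.py", "-c", CONFIG,
        "-o", f"Global.mode={mode}",
        "-o", f"Global.dataset_dir={dataset_dir}",
    ]
    for key, value in (overrides or {}).items():
        argv += ["-o", f"{key}={value}"]
    return argv


command = build_command(
    "train",
    "./dataset/ts_classify_examples",
    {"Global.device": "gpu:0,1", "Train.epochs_iters": 10},
)
print(shlex.join(command))
```

To actually start training, the list could be passed to `subprocess.run(command, check=True)` from the directory that contains `main.py`.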

👉 More Details

- During model training, PaddleX automatically saves model weight files, with the default path being `output`. To specify a different save path, set the `Global.output` field in the configuration file, for example via `-o Global.output`.
- PaddleX hides the distinction between dynamic graph weights and static graph weights. During model training, both dynamic and static graph weights are produced, and static graph weights are used by default for model inference.
- After model training, all outputs are saved in the specified output directory (default is `./output/`), typically including:
  - `train_result.json`: Training result record file, including whether the training task completed successfully, produced weight metrics, and related file paths.
  - `train.log`: Training log file, recording model metric changes, loss changes, etc.
  - `config.yaml`: Training configuration file, recording the hyperparameters used for this training session.
  - `best_accuracy.pdparams.tar`, `scaler.pkl`, `.checkpoints`, `.inference`: Model weight-related files, including network parameters, optimizers, and network architecture.
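A quick sanity check after training is to confirm that the record files listed above exist. The sketch below assumes they sit directly in the output directory, which the text does not state explicitly. The helper name `missing_outputs` is hypothetical, and the demo uses a temporary directory instead of a real training run.

```python
import tempfile
from pathlib import Path

# Record files named in the list above; weight files are left out because
# their exact location inside the output directory is not specified here.
EXPECTED_FILES = ("train_result.json", "train.log", "config.yaml")


def missing_outputs(output_dir):
    """Return the expected record files that are absent from output_dir."""
    root = Path(output_dir)
    return [name for name in EXPECTED_FILES if not (root / name).is_file()]


# Demo on a throwaway directory that only contains a log file.
with tempfile.TemporaryDirectory() as tmp:
    (Path(tmp) / "train.log").write_text("demo log\n")
    demo_missing = missing_outputs(tmp)

print(demo_missing)
```

After a real run, `missing_outputs("./output")` would be the call to make.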

4.3 Model Evaluation

After completing model training, you can evaluate the specified model weights file on the validation set to verify the model's accuracy. Using PaddleX for model evaluation can be done with a single command:

python main.py -c paddlex/configs/modules/ts_classification/TimesNet_cls.yaml \
    -o Global.mode=evaluate \
    -o Global.dataset_dir=./dataset/ts_classify_examples

- Specify the path to the model's `.yaml` configuration file (here it's `TimesNet_cls.yaml`)
- Specify the mode as model evaluation: `-o Global.mode=evaluate`
- Specify the path to the validation dataset: `-o Global.dataset_dir`

Other relevant parameters can be set by modifying the `Global` and `Evaluate` fields in the `.yaml` configuration file. For details, refer to PaddleX Time Series Task Model Configuration File Parameter Description.

👉 More Details

When evaluating the model, you need to specify the model weights file path. Each configuration file has a default weight save path built-in. If you need to change it, simply set it by appending a command line parameter, such as -o Evaluate.weight_path=./output/best_model/model.pdparams.

After model evaluation completes, an evaluate_result.json file is produced. It records whether the evaluation task completed successfully and the model's evaluation metrics, including Top-1 Accuracy and F1 score.

4.4 Model Inference and Model Integration

After completing model training and evaluation, you can use the trained model weights for inference prediction or Python integration.

4.4.1 Model Inference

To perform inference prediction via the command line, simply use the following command:

Before running the following code, please download the demo csv to your local machine.

python main.py -c paddlex/configs/modules/ts_classification/TimesNet_cls.yaml \
    -o Global.mode=predict \
    -o Predict.model_dir="./output/inference" \
    -o Predict.input="ts_cls.csv"

- Specify the path to the model's `.yaml` configuration file (here it's `TimesNet_cls.yaml`)
- Specify the mode as model inference prediction: `-o Global.mode=predict`
- Specify the model weights path: `-o Predict.model_dir="./output/inference"`
- Specify the input data path: `-o Predict.input="..."`

Other relevant parameters can be set by modifying the `Global` and `Predict` fields in the `.yaml` configuration file. For details, refer to PaddleX Time Series Task Model Configuration File Parameter Description.

4.4.2 Model Integration

Models can be directly integrated into the PaddleX pipeline or directly into your own projects.

- Pipeline Integration

The time series classification module can be integrated into PaddleX pipelines such as Time Series Classification. Simply replace the model path to update the time series classification model. In pipeline integration, you can use service deployment to deploy your trained model.

- Module Integration

The weights you produce can be directly integrated into the time series classification module. Refer to the Python example code in Quick Integration and simply replace the model with the path to your trained model.
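As a hedged sketch of module integration, the function below wraps the Quick Integration calls and passes the trained weights through the `model_dir` parameter of `create_model`. The function name `classify_with_trained_model` is hypothetical, and the default path assumes the `./output/inference` directory used in the inference command of section 4.4.1. PaddleX is imported lazily, so defining the function does not require it to be installed.

```python
def classify_with_trained_model(csv_path, model_dir="./output/inference"):
    """Run the trained classifier on one csv and return the json results."""
    # Imported inside the function so this module loads without PaddleX.
    from paddlex import create_model

    # model_dir points create_model at user-trained weights instead of
    # PaddleX's built-in model parameters.
    model = create_model(model_name="TimesNet_cls", model_dir=model_dir)
    return [res.json for res in model.predict(csv_path, batch_size=1)]
```

With PaddleX installed and training finished, `classify_with_trained_model("ts_cls.csv")` would be the call to try.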

You can also use the PaddleX high-performance inference plugin to optimize the inference process of your model and further improve efficiency. For detailed procedures, please refer to the PaddleX High-Performance Inference Guide.