Object Detection Module Tutorial

I. Overview

The object detection module is a crucial component in computer vision systems, responsible for locating and marking regions containing specific objects in images or videos. The performance of this module directly impacts the accuracy and efficiency of the entire computer vision system. The object detection module typically outputs bounding boxes for the target regions, which are then passed as input to the object recognition module for further processing.

II. List of Supported Models

The inference time only includes the model inference time and does not include the time for pre- or post-processing.

| Model | Model Download Link | mAP (%) | GPU Inference Time (ms) [Normal Mode / High-Performance Mode] | CPU Inference Time (ms) [Normal Mode / High-Performance Mode] | Model Storage Size (MB) | Description |
|---|---|---|---|---|---|---|
| PicoDet-L | Inference Model/Training Model | 42.6 | 14.31 / 11.06 | 45.95 / 25.06 | 20.9 | PP-PicoDet is a lightweight object detection algorithm for full-size, wide-aspect-ratio targets, designed with the computational capacity of mobile devices in mind. Compared to traditional object detection algorithms, PP-PicoDet has a smaller model size and lower computational complexity, achieving higher speed and lower latency while maintaining detection accuracy. |
| PicoDet-S | Inference Model/Training Model | 29.1 | 9.15 / 3.26 | 16.06 / 4.04 | 4.4 | |
| PP-YOLOE_plus-L | Inference Model/Training Model | 52.9 | 32.06 / 28.00 | 185.32 / 116.21 | 185.3 | PP-YOLOE_plus is an upgraded version of PP-YOLOE, the high-precision cloud-edge integrated model developed by Baidu's PaddlePaddle vision team. By using the large-scale Objects365 dataset and optimizing preprocessing, it significantly improves the model's end-to-end inference speed. |
| PP-YOLOE_plus-S | Inference Model/Training Model | 43.7 | 11.43 / 7.52 | 60.16 / 26.94 | 28.3 | |
| RT-DETR-H | Inference Model/Training Model | 56.3 | 114.57 / 101.56 | 938.20 / 938.20 | 435.8 | RT-DETR is the first real-time end-to-end object detector. It features an efficient hybrid encoder that meets both model performance and throughput requirements while handling multi-scale features efficiently, and it proposes an accelerated and optimized query selection mechanism to optimize the dynamics of decoder queries. RT-DETR supports flexible end-to-end inference speeds by using different decoders. |
| RT-DETR-L | Inference Model/Training Model | 53.0 | 34.76 / 27.60 | 495.39 / 247.68 | 113.7 | |

❗ The above list features the 6 core models that the object detection module primarily supports. In total, this module supports 41 models. The complete list of models is as follows:


| Model | Model Download Link | mAP (%) | GPU Inference Time (ms) [Normal Mode / High-Performance Mode] | CPU Inference Time (ms) [Normal Mode / High-Performance Mode] | Model Storage Size (MB) | Description |
|---|---|---|---|---|---|---|
| Cascade-FasterRCNN-ResNet50-FPN | Inference Model/Training Model | 41.1 | 120.28 / 120.28 | - / 6514.61 | 245.4 | Cascade-FasterRCNN is an improved version of the Faster R-CNN object detection model. By cascading multiple detectors and refining detection results with different IoU thresholds, it addresses the mismatch between the training and prediction stages and improves detection accuracy. |
| Cascade-FasterRCNN-ResNet50-vd-SSLDv2-FPN | Inference Model/Training Model | 45.0 | 124.10 / 124.10 | - / 6709.52 | 246.2 | |
| CenterNet-DLA-34 | Inference Model/Training Model | 37.6 | 67.19 / 67.19 | 6622.61 / 6622.61 | 75.4 | CenterNet is an anchor-free object detection model that represents each object to be detected as a single keypoint, the center point of its bounding box, and performs regression from these keypoints. |
| CenterNet-ResNet50 | Inference Model/Training Model | 38.9 | 216.06 / 216.06 | 2545.79 / 2545.79 | 319.7 | |
| DETR-R50 | Inference Model/Training Model | 42.3 | 58.80 / 26.90 | 370.96 / 208.77 | 159.3 | DETR is a Transformer-based object detection model proposed by Facebook. It achieves end-to-end object detection without predefined anchor boxes or NMS post-processing. |
| FasterRCNN-ResNet34-FPN | Inference Model/Training Model | 37.8 | 76.90 / 76.90 | - / 4136.79 | 137.5 | Faster R-CNN is a typical two-stage object detection model that first generates region proposals and then performs classification and regression on these proposals. Compared to its predecessors R-CNN and Fast R-CNN, its main improvement lies in region proposal: it uses a Region Proposal Network (RPN) instead of traditional selective search. The RPN is a convolutional neural network (CNN) that shares convolutional features with the detection network, reducing the computational overhead of region proposals. |
| FasterRCNN-ResNet50-FPN | Inference Model/Training Model | 38.4 | 95.48 / 95.48 | - / 3693.90 | 148.1 | |
| FasterRCNN-ResNet50-vd-FPN | Inference Model/Training Model | 39.5 | 98.03 / 98.03 | - / 4278.36 | 148.1 | |
| FasterRCNN-ResNet50-vd-SSLDv2-FPN | Inference Model/Training Model | 41.4 | 99.23 / 99.23 | - / 4415.68 | 148.1 | |
| FasterRCNN-ResNet50 | Inference Model/Training Model | 36.7 | 129.10 / 129.10 | - / 3868.44 | 120.2 | |
| FasterRCNN-ResNet101-FPN | Inference Model/Training Model | 41.4 | 131.48 / 131.48 | - / 4380.00 | 216.3 | |
| FasterRCNN-ResNet101 | Inference Model/Training Model | 39.0 | 216.71 / 216.71 | - / 5376.45 | 188.1 | |
| FasterRCNN-ResNeXt101-vd-FPN | Inference Model/Training Model | 43.4 | 234.38 / 234.38 | - / 6154.61 | 360.6 | |
| FasterRCNN-Swin-Tiny-FPN | Inference Model/Training Model | 42.6 | 65.92 / 65.92 | - / 2468.98 | 159.8 | |
| FCOS-ResNet50 | Inference Model/Training Model | 39.6 | 101.02 / 34.42 | 752.15 / 752.15 | 124.2 | FCOS is an anchor-free object detection model that performs dense prediction. It uses the RetinaNet backbone and, for each location on the feature map, directly regresses the distances to the four sides of the target object's bounding box. It also predicts the object's category and its centerness (the degree to which a feature-map location is offset from the object's center), which is ultimately used as a weight to adjust the object score. |
| PicoDet-L | Inference Model/Training Model | 42.6 | 14.31 / 11.06 | 45.95 / 25.06 | 20.9 | PP-PicoDet is a lightweight object detection algorithm designed for full-size and wide-aspect-ratio targets, with a focus on the computational capacity of mobile devices. Compared to traditional object detection algorithms, PP-PicoDet has smaller model sizes and lower computational complexity, achieving higher speeds and lower latency while maintaining detection accuracy. |
| PicoDet-M | Inference Model/Training Model | 37.5 | 10.48 / 5.00 | 22.88 / 9.03 | 16.8 | |
| PicoDet-S | Inference Model/Training Model | 29.1 | 9.15 / 3.26 | 16.06 / 4.04 | 4.4 | |
| PicoDet-XS | Inference Model/Training Model | 26.2 | 9.54 / 3.52 | 17.96 / 5.38 | 5.7 | |
| PP-YOLOE_plus-L | Inference Model/Training Model | 52.9 | 32.06 / 28.00 | 185.32 / 116.21 | 185.3 | PP-YOLOE_plus is an iteratively optimized and upgraded version of PP-YOLOE, a high-precision cloud-edge integrated model developed by Baidu PaddlePaddle's Vision Team. By leveraging the large-scale Objects365 dataset and optimizing preprocessing, it significantly improves the model's end-to-end inference speed. |
| PP-YOLOE_plus-M | Inference Model/Training Model | 49.8 | 18.37 / 15.04 | 108.77 / 63.48 | 82.3 | |
| PP-YOLOE_plus-S | Inference Model/Training Model | 43.7 | 11.43 / 7.52 | 60.16 / 26.94 | 28.3 | |
| PP-YOLOE_plus-X | Inference Model/Training Model | 54.7 | 56.28 / 50.60 | 292.08 / 212.24 | 349.4 | |
| RT-DETR-H | Inference Model/Training Model | 56.3 | 114.57 / 101.56 | 938.20 / 938.20 | 435.8 | RT-DETR is the first real-time end-to-end object detector. It features an efficient hybrid encoder that balances model performance and throughput while efficiently processing multi-scale features, and it introduces an accelerated and optimized query selection mechanism to optimize the dynamics of decoder queries. RT-DETR supports flexible end-to-end inference speeds through the use of different decoders. |
| RT-DETR-L | Inference Model/Training Model | 53.0 | 34.76 / 27.60 | 495.39 / 247.68 | 113.7 | |
| RT-DETR-R18 | Inference Model/Training Model | 46.5 | 19.11 / 14.82 | 263.13 / 143.05 | 70.7 | |
| RT-DETR-R50 | Inference Model/Training Model | 53.1 | 41.11 / 10.12 | 536.20 / 482.86 | 149.1 | |
| RT-DETR-X | Inference Model/Training Model | 54.8 | 61.91 / 51.41 | 639.79 / 639.79 | 232.9 | |
| YOLOv3-DarkNet53 | Inference Model/Training Model | 39.1 | 39.62 / 35.54 | 166.57 / 136.34 | 219.7 | YOLOv3 is a real-time end-to-end object detector that uses a single convolutional neural network (CNN) to frame object detection as a regression problem, enabling real-time detection. The model employs multi-scale detection to improve performance on objects of different sizes. |
| YOLOv3-MobileNetV3 | Inference Model/Training Model | 31.4 | 16.54 / 6.21 | 64.37 / 45.55 | 83.8 | |
| YOLOv3-ResNet50_vd_DCN | Inference Model/Training Model | 40.6 | 31.64 / 26.72 | 226.75 / 226.75 | 163.0 | |
| YOLOX-L | Inference Model/Training Model | 50.1 | 49.68 / 45.03 | 232.52 / 156.24 | 192.5 | Building on the YOLOv3 framework, YOLOX significantly improves detection performance in complex scenarios by incorporating a decoupled head, data augmentation, an anchor-free design, and SimOTA. |
| YOLOX-M | Inference Model/Training Model | 46.9 | 43.46 / 29.52 | 147.64 / 80.06 | 90.0 | |
| YOLOX-N | Inference Model/Training Model | 26.1 | 42.94 / 17.79 | 64.15 / 7.19 | 3.4 | |
| YOLOX-S | Inference Model/Training Model | 40.4 | 46.53 / 29.34 | 98.37 / 35.02 | 32.0 | |
| YOLOX-T | Inference Model/Training Model | 32.9 | 31.81 / 18.91 | 55.34 / 11.63 | 18.1 | |
| YOLOX-X | Inference Model/Training Model | 51.8 | 84.06 / 77.28 | 390.38 / 272.88 | 351.5 | |
| Co-Deformable-DETR-R50 | Inference Model/Training Model | 49.7 | 259.62 / 259.62 | 32413.76 / 32413.76 | 184 | Co-DETR is an advanced end-to-end object detector based on the DETR architecture. It significantly improves detection performance and training efficiency by introducing a collaborative hybrid assignment training strategy that combines traditional one-to-many label assignment with one-to-one matching. |
| Co-Deformable-DETR-Swin-T | Inference Model/Training Model | 48.0 (@640x640 input shape) | 120.17 / 120.17 | - / 15620.29 | 187 | |
| Co-DINO-R50 | Inference Model/Training Model | 52.0 | 1123.23 / 1123.23 | - / - | 186 | |
| Co-DINO-Swin-L | Inference Model/Training Model | 55.9 (@640x640 input shape) | - / - | - / - | 840 | |

- Performance Test Environment
  - Test Dataset: COCO2017 validation set.
  - Hardware Configuration:
    - GPU: NVIDIA Tesla T4
    - CPU: Intel Xeon Gold 6271C @ 2.60GHz
  - Software Environment:
    - Ubuntu 20.04 / CUDA 11.8 / cuDNN 8.9 / TensorRT 8.6.1.6
    - paddlepaddle 3.0.0 / paddlex 3.0.3

| Mode | GPU Configuration | CPU Configuration | Acceleration Technology Combination |
|---|---|---|---|
| Normal Mode | FP32 Precision / No TRT Acceleration | FP32 Precision / 8 Threads | PaddleInference |
| High-Performance Mode | Optimal combination of pre-selected precision types and acceleration strategies | FP32 Precision / 8 Threads | Pre-selected optimal backend (Paddle/OpenVINO/TRT, etc.) |
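To make the speed/accuracy trade-off concrete, the sketch below picks the most accurate core model whose Normal Mode GPU inference time fits a latency budget. The mAP and timing numbers are copied from the core model table; the function name `pick_model` and the budget-based selection rule are illustrative assumptions, not part of PaddleX.

```python
from typing import Dict, Optional, Tuple

# (mAP %, GPU inference time in ms, Normal Mode) for the six core models,
# copied from the core model table (Tesla T4, excluding pre/post-processing).
CORE_MODELS: Dict[str, Tuple[float, float]] = {
    "PicoDet-L": (42.6, 14.31),
    "PicoDet-S": (29.1, 9.15),
    "PP-YOLOE_plus-L": (52.9, 32.06),
    "PP-YOLOE_plus-S": (43.7, 11.43),
    "RT-DETR-H": (56.3, 114.57),
    "RT-DETR-L": (53.0, 34.76),
}


def pick_model(max_gpu_ms: float) -> Optional[str]:
    """Return the highest-mAP core model whose GPU time fits the budget.

    Hypothetical helper for illustration; returns None if no model fits.
    """
    fitting = [
        (map_pct, name)
        for name, (map_pct, gpu_ms) in CORE_MODELS.items()
        if gpu_ms <= max_gpu_ms
    ]
    if not fitting:
        return None
    # max() over (mAP, name) tuples selects the most accurate fitting model.
    return max(fitting)[1]


if __name__ == "__main__":
    for budget in (5.0, 20.0, 35.0, 200.0):
        print(f"{budget:>6.1f} ms -> {pick_model(budget)}")
```

The same approach works with the CPU column or the High-Performance Mode timings; substitute whichever column matches your deployment.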

III. Quick Integration

❗ Before proceeding with quick integration, please install the PaddleX wheel package. For detailed instructions, refer to the PaddleX Local Installation Guide.

After installing the wheel package, you can perform object detection inference with just a few lines of code. You can easily switch between models within the module and integrate the object detection inference into your projects. Before running the following code, please download the demo image to your local machine.

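```python
from paddlex import create_model

# Instantiate the PicoDet-S object detection model with its built-in PaddleX weights.
model = create_model("PicoDet-S")

# Run inference on the demo image (download it to the working directory first).
output = model.predict("general_object_detection_002.png", batch_size=1)

for res in output:
    # Print the result, then save the visualization image and the JSON result.
    res.print(json_format=False)
    res.save_to_img("./output/")
    res.save_to_json("./output/res.json")
```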

The result obtained after running is:

```
{'res': {'input_path': 'general_object_detection_002.png', 'boxes': [{'cls_id': 49, 'label': 'orange', 'score': 0.8188614249229431, 'coordinate': [661.351806640625, 93.0582275390625, 870.759033203125, 305.9371337890625]}, {'cls_id': 47, 'label': 'apple', 'score': 0.7745078206062317, 'coordinate': [76.80911254882812, 274.7490539550781, 330.5422058105469, 520.0427856445312]}, {'cls_id': 47, 'label': 'apple', 'score': 0.7271787524223328, 'coordinate': [285.3264465332031, 94.31749725341797, 469.7364501953125, 297.4034423828125]}, {'cls_id': 46, 'label': 'banana', 'score': 0.5576589703559875, 'coordinate': [310.8041076660156, 361.4362487792969, 685.1868896484375, 712.591552734375]}, {'cls_id': 47, 'label': 'apple', 'score': 0.5490103363990784, 'coordinate': [764.6251831054688, 285.7609558105469, 924.8153076171875, 440.9289245605469]}, {'cls_id': 47, 'label': 'apple', 'score': 0.515821635723114, 'coordinate': [853.9830932617188, 169.4142303466797, 987.802978515625, 303.5861511230469]}, {'cls_id': 60, 'label': 'dining table', 'score': 0.514293372631073, 'coordinate': [0.5308971405029297, 0.32445716857910156, 1072.953369140625, 720]}, {'cls_id': 47, 'label': 'apple', 'score': 0.510750949382782, 'coordinate': [57.36802673339844, 23.455347061157227, 213.39601135253906, 176.45611572265625]}]}}
```
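Each entry in `boxes` carries `cls_id`, `label`, `score`, and `coordinate`. As a sketch of downstream post-processing, the functions below filter boxes by score and count detections per label. They assume the result is a plain dict shaped like the printed output above (with or without the outer `'res'` key) and that `coordinate` is `[x_min, y_min, x_max, y_max]` in pixels; both are assumptions inferred from the sample output, and the helper names are hypothetical.

```python
from collections import Counter
from typing import Any, Dict, List


def filter_boxes(result: Dict[str, Any], min_score: float) -> List[Dict[str, Any]]:
    """Keep boxes whose score is at least min_score.

    Accepts either the printed form {'res': {...}} or the inner dict.
    """
    inner = result.get("res", result)
    return [box for box in inner["boxes"] if box["score"] >= min_score]


def box_area(box: Dict[str, Any]) -> float:
    # Assumes coordinate = [x_min, y_min, x_max, y_max] in pixels.
    x_min, y_min, x_max, y_max = box["coordinate"]
    return max(0.0, x_max - x_min) * max(0.0, y_max - y_min)


def count_labels(result: Dict[str, Any], min_score: float = 0.5) -> Counter:
    """Count detections per label after score filtering."""
    return Counter(box["label"] for box in filter_boxes(result, min_score))


# Trimmed sample taken from the printed result above (values rounded).
sample = {
    "res": {
        "input_path": "general_object_detection_002.png",
        "boxes": [
            {"cls_id": 49, "label": "orange", "score": 0.8189,
             "coordinate": [661.35, 93.06, 870.76, 305.94]},
            {"cls_id": 47, "label": "apple", "score": 0.7745,
             "coordinate": [76.81, 274.75, 330.54, 520.04]},
            {"cls_id": 47, "label": "apple", "score": 0.7272,
             "coordinate": [285.33, 94.32, 469.74, 297.40]},
            {"cls_id": 46, "label": "banana", "score": 0.5577,
             "coordinate": [310.80, 361.44, 685.19, 712.59]},
        ],
    }
}

if __name__ == "__main__":
    # With min_score=0.7 only the orange and the two apples remain.
    print(count_labels(sample, min_score=0.7))
```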

The visualization image is as follows:


Related methods, parameters, and explanations are as follows:

`create_model` instantiates an object detection model (here, `PicoDet-S` is used as an example). Its parameters are explained as follows:

| Parameter | Parameter Description | Parameter Type | Options | Default Value |
|---|---|---|---|---|
| `model_name` | Name of the model | `str` | None | None |
| `model_dir` | Path to store the model | `str` | None | None |
| `device` | The device used for model inference | `str` | Supports specific GPU card numbers such as `"gpu:0"`, other hardware card numbers such as `"npu:0"`, or the CPU, `"cpu"` | `gpu:0` |
| `img_size` | Size of the input image; if not specified, the default configuration of the PaddleX official model will be used | `int`/`list` | | None |
| `threshold` | Threshold for filtering low-confidence prediction results; if not specified, the default configuration of the PaddleX official model will be used | `float` | None | None |
| `use_hpip` | Whether to enable the high-performance inference plugin | `bool` | None | `False` |
| `hpi_config` | High-performance inference configuration | `dict` \| `None` | None | None |

- `model_name` must be specified. When only `model_name` is given, PaddleX uses its built-in default model parameters; if `model_dir` is also specified, the user-defined model at that path is used instead.
- The `predict()` method of the object detection model is called for inference. It takes the parameters `input`, `batch_size`, and `threshold`, which are explained as follows:

| Parameter | Parameter Description | Parameter Type | Options | Default Value |
|---|---|---|---|---|
| `input` | Data to be predicted, supporting multiple input types | Python Var/`str`/`dict`/`list` | | None |
| `batch_size` | Batch size | `int` | Any integer | 1 |
| `threshold` | Threshold for filtering low-confidence prediction results; if not specified, the `threshold` specified in `create_model` is used, and if `create_model` does not specify it either, the default configuration of the PaddleX official model is used | `float` | None | None |
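The fallback order for `threshold` described above (the `predict()` argument first, then the `create_model` argument, then the official model's default configuration) can be expressed as a small resolver. This is a hypothetical illustration of the documented precedence, not PaddleX's internal code; the default of 0.5 below is an assumed placeholder, since the actual default comes from each model's configuration.

```python
from typing import Optional


def resolve_threshold(
    predict_threshold: Optional[float],
    create_model_threshold: Optional[float],
    model_default: float,
) -> float:
    """Return the effective score threshold following the documented precedence."""
    # 1. A threshold passed to predict() wins, even if it is 0.0.
    if predict_threshold is not None:
        return predict_threshold
    # 2. Otherwise fall back to the threshold given to create_model().
    if create_model_threshold is not None:
        return create_model_threshold
    # 3. Otherwise use the default from the official model configuration.
    return model_default


ASSUMED_DEFAULT = 0.5  # placeholder; the real value comes from the model config

if __name__ == "__main__":
    print(resolve_threshold(None, None, ASSUMED_DEFAULT))
    print(resolve_threshold(None, 0.4, ASSUMED_DEFAULT))
    print(resolve_threshold(0.3, 0.4, ASSUMED_DEFAULT))
```

Checking `is not None` rather than truthiness keeps an explicit threshold of `0.0` from being silently replaced by a fallback.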

- The prediction result for each sample is of type `dict` and supports operations such as printing, saving as an image, and saving as a JSON file:

| Method | Method Description | Parameter | Parameter Type | Parameter Description | Default Value |
|---|---|---|---|---|---|
| `print()` | Print the results to the terminal | `format_json` | `bool` | Whether to format the output content using JSON indentation | `True` |
| | | `indent` | `int` | Indentation level used to beautify the output JSON data for readability; only effective when `format_json` is `True` | 4 |
| | | `ensure_ascii` | `bool` | Whether to escape non-ASCII characters to Unicode. If `True`, all non-ASCII characters are escaped; if `False`, the original characters are retained. Only effective when `format_json` is `True` | `False` |
| `save_to_json()` | Save the results as a JSON file | `save_path` | `str` | The path to save the file. If it is a directory, the saved file name will be consistent with the input file name | None |
| | | `indent` | `int` | Indentation level used to beautify the output JSON data for readability | 4 |
| | | `ensure_ascii` | `bool` | Whether to escape non-ASCII characters to Unicode. If `True`, all non-ASCII characters are escaped; if `False`, the original characters are retained | `False` |
| `save_to_img()` | Save the results as an image file | `save_path` | `str` | The path to save the file. If it is a directory, the saved file name will be consistent with the input file name | None |

- Additionally, the visualization image and the prediction results can be obtained through the following attributes:

| Attribute | Attribute Description |
|---|---|
| `json` | Get the prediction result in JSON format |
| `img` | Get the visualization image in `dict` format |

For more information on using PaddleX's single-model inference APIs, refer to the PaddleX Single Model Python Script Usage Instructions.

IV. Custom Development

If you seek higher precision from existing models, you can leverage PaddleX's custom development capabilities to develop better object detection models. Before developing object detection models with PaddleX, ensure you have installed the object detection related training plugins. For installation instructions, refer to the PaddleX Local Installation Guide.

4.1 Data Preparation

Before model training, prepare the corresponding dataset for the task module. PaddleX provides a data validation feature for each module, and only datasets that pass validation can be used for model training. Additionally, PaddleX provides demo datasets for each module, which you can use to complete subsequent development. If you wish to use a private dataset for model training, refer to the PaddleX Object Detection Task Module Data Annotation Guide.

4.1.1 Download Demo Data

You can download the demo dataset to a specified folder using the following command:

```bash
wget https://paddle-model-ecology.bj.bcebos.com/paddlex/data/det_coco_examples.tar -P ./dataset
tar -xf ./dataset/det_coco_examples.tar -C ./dataset/
```

4.1.2 Data Validation

Validate your dataset with a single command:

```bash
python main.py -c paddlex/configs/modules/object_detection/PicoDet-S.yaml \
    -o Global.mode=check_dataset \
    -o Global.dataset_dir=./dataset/det_coco_examples
```

After executing the above command, PaddleX will validate the dataset and summarize its basic information. If the command runs successfully, it will print Check dataset passed ! in the log. The validation results file is saved in ./output/check_dataset_result.json, and related outputs are saved in the ./output/check_dataset directory in the current directory, including visual examples of sample images and sample distribution histograms.


The specific content of the validation result file is:

```json
{
  "done_flag": true,
  "check_pass": true,
  "attributes": {
    "num_classes": 4,
    "train_samples": 701,
    "train_sample_paths": [
      "check_dataset/demo_img/road839.png",
      "check_dataset/demo_img/road363.png",
      "check_dataset/demo_img/road148.png",
      "check_dataset/demo_img/road237.png",
      "check_dataset/demo_img/road733.png",
      "check_dataset/demo_img/road861.png",
      "check_dataset/demo_img/road762.png",
      "check_dataset/demo_img/road515.png",
      "check_dataset/demo_img/road754.png",
      "check_dataset/demo_img/road173.png"
    ],
    "val_samples": 176,
    "val_sample_paths": [
      "check_dataset/demo_img/road218.png",
      "check_dataset/demo_img/road681.png",
      "check_dataset/demo_img/road138.png",
      "check_dataset/demo_img/road544.png",
      "check_dataset/demo_img/road596.png",
      "check_dataset/demo_img/road857.png",
      "check_dataset/demo_img/road203.png",
      "check_dataset/demo_img/road589.png",
      "check_dataset/demo_img/road655.png",
      "check_dataset/demo_img/road245.png"
    ]
  },
  "analysis": {
    "histogram": "check_dataset/histogram.png"
  },
  "dataset_path": "det_coco_examples",
  "show_type": "image",
  "dataset_type": "COCODetDataset"
}
```

In the above validation results, check_pass being True indicates that the dataset format meets the requirements. Explanations for other indicators are as follows:

- `attributes.num_classes`: The number of classes in this dataset is 4;
- `attributes.train_samples`: The number of training samples in this dataset is 701;
- `attributes.val_samples`: The number of validation samples in this dataset is 176;
- `attributes.train_sample_paths`: A list of relative paths to the visualization images of training samples in this dataset;
- `attributes.val_sample_paths`: A list of relative paths to the visualization images of validation samples in this dataset.

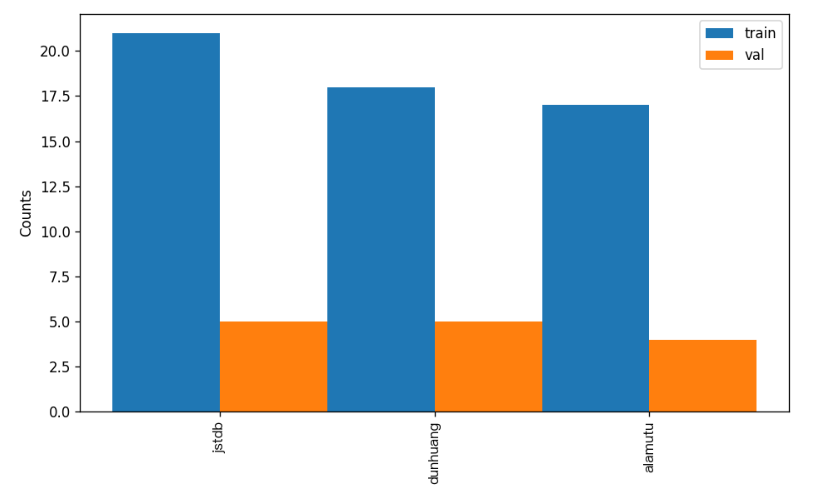

Additionally, the dataset verification also analyzes the distribution of sample numbers across all classes in the dataset and generates a histogram (histogram.png) for visualization:
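If you script the validation step, the result file can be checked programmatically. The sketch below takes the parsed content of `check_dataset_result.json` (using only keys shown in the sample above), stops when `check_pass` is false, and reports the current train/validation ratio, which helps when deciding whether to re-split the dataset. The helper names are illustrative, not PaddleX APIs.

```python
import json
from pathlib import Path
from typing import Any, Dict


def load_check_result(path: str) -> Dict[str, Any]:
    """Read a validation result file such as ./output/check_dataset_result.json."""
    return json.loads(Path(path).read_text(encoding="utf-8"))


def summarize_check_result(result: Dict[str, Any]) -> Dict[str, Any]:
    """Fail fast on a failed check and summarize the sample counts."""
    if not result.get("check_pass", False):
        raise ValueError("dataset validation did not pass")
    attrs = result["attributes"]
    train, val = attrs["train_samples"], attrs["val_samples"]
    return {
        "num_classes": attrs["num_classes"],
        "train_samples": train,
        "val_samples": val,
        # Share of training samples, rounded to one decimal place.
        "train_percent": round(100 * train / (train + val), 1),
    }


if __name__ == "__main__":
    # Counts from the sample validation result shown above.
    demo = {
        "check_pass": True,
        "attributes": {"num_classes": 4, "train_samples": 701, "val_samples": 176},
    }
    print(summarize_check_result(demo))
```

For the demo dataset this reports roughly an 80/20 split (79.9% training samples).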

4.1.3 Dataset Format Conversion / Dataset Splitting (Optional)

After completing data validation, you can convert the dataset format and re-split the training/validation ratio by modifying the configuration file or appending hyperparameters.


(1) Dataset Format Conversion

Object detection supports converting datasets in VOC and LabelMe formats to COCO format.

Parameters related to dataset validation can be set by modifying the fields under CheckDataset in the configuration file. Examples of some parameters in the configuration file are as follows:

- `CheckDataset`:
  - `convert`:
    - `enable`: Whether to perform dataset format conversion. Object detection supports converting `VOC` and `LabelMe` format datasets to `COCO` format. Default is `False`;
    - `src_dataset_type`: If dataset format conversion is performed, the source dataset format must be set. Default is `null`; optional values are `VOC`, `LabelMe`, `VOCWithUnlabeled`, and `LabelMeWithUnlabeled`.

For example, to convert the sample `LabelMe` format dataset below to `COCO` format, download it and then modify the configuration as follows:

```bash
cd /path/to/paddlex
wget https://paddle-model-ecology.bj.bcebos.com/paddlex/data/det_labelme_examples.tar -P ./dataset
tar -xf ./dataset/det_labelme_examples.tar -C ./dataset/
```

```yaml
......
CheckDataset:
  ......
  convert:
    enable: True
    src_dataset_type: LabelMe
  ......
```

Then execute the command:

```bash
python main.py -c paddlex/configs/modules/object_detection/PicoDet-S.yaml \
    -o Global.mode=check_dataset \
    -o Global.dataset_dir=./dataset/det_labelme_examples
```

These parameters can also be set by appending command-line arguments. Taking a LabelMe format dataset as an example:

```bash
python main.py -c paddlex/configs/modules/object_detection/PicoDet-S.yaml \
    -o Global.mode=check_dataset \
    -o Global.dataset_dir=./dataset/det_labelme_examples \
    -o CheckDataset.convert.enable=True \
    -o CheckDataset.convert.src_dataset_type=LabelMe
```
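The configuration-file form and the command-line form are equivalent: each nested field under a configuration section becomes one dotted `-o Section.sub.key=value` argument. The sketch below flattens a nested dict into such arguments, which can help when generating commands from a script. The function name is hypothetical, and it assumes values are rendered with Python's `str()`, so `True` appears as `True`, matching the examples in this guide.

```python
from typing import Any, Dict, List


def to_overrides(config: Dict[str, Any], prefix: str = "") -> List[str]:
    """Flatten a nested config dict into ['-o', 'A.b.c=value', ...] arguments."""
    args: List[str] = []
    for key, value in config.items():
        dotted = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            # Recurse into nested sections such as CheckDataset -> convert.
            args.extend(to_overrides(value, dotted))
        else:
            args.extend(["-o", f"{dotted}={value}"])
    return args


if __name__ == "__main__":
    overrides = to_overrides(
        {
            "Global": {
                "mode": "check_dataset",
                "dataset_dir": "./dataset/det_labelme_examples",
            },
            "CheckDataset": {
                "convert": {"enable": True, "src_dataset_type": "LabelMe"},
            },
        }
    )
    print(" ".join(overrides))
```

Prepend `["python", "main.py", "-c", "paddlex/configs/modules/object_detection/PicoDet-S.yaml"]` to the returned list to obtain the full command shown above.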

(2) Dataset Splitting

Parameters for dataset splitting can be set by modifying the fields under CheckDataset in the configuration file. Examples of some parameters in the configuration file are as follows:

- `CheckDataset`:
  - `split`:
    - `enable`: Whether to re-split the dataset. When `True`, dataset splitting is performed. Default is `False`;
    - `train_percent`: If the dataset is re-split, the percentage of the training set must be set. It is an integer between 0 and 100, and its sum with `val_percent` must be 100;
    - `val_percent`: If the dataset is re-split, the percentage of the validation set must be set. It is an integer between 0 and 100, and its sum with `train_percent` must be 100.

For example, to re-split the dataset into a 90% training set and a 10% validation set, modify the configuration file as follows:

```yaml
......
CheckDataset:
  ......
  split:
    enable: True
    train_percent: 90
    val_percent: 10
  ......
```

Then execute the command:

```bash
python main.py -c paddlex/configs/modules/object_detection/PicoDet-S.yaml \
    -o Global.mode=check_dataset \
    -o Global.dataset_dir=./dataset/det_coco_examples
```

After dataset splitting is executed, the original annotation files will be renamed to xxx.bak in the original path.

These parameters can also be set by appending command-line arguments:

```bash
python main.py -c paddlex/configs/modules/object_detection/PicoDet-S.yaml \
    -o Global.mode=check_dataset \
    -o Global.dataset_dir=./dataset/det_coco_examples \
    -o CheckDataset.split.enable=True \
    -o CheckDataset.split.train_percent=90 \
    -o CheckDataset.split.val_percent=10
```
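The constraints on `split` (both percentages are integers from 0 to 100 and must sum to 100) are easy to check before launching the command. The validator below is a hypothetical helper that mirrors those documented rules. It also estimates the subset sizes, assuming simple floor rounding, which may differ from how PaddleX actually rounds.

```python
from typing import Any, Dict, Tuple


def validate_split(split: Dict[str, Any]) -> None:
    """Raise ValueError if a CheckDataset.split section breaks the documented rules."""
    if not split.get("enable", False):
        return  # nothing to check when re-splitting is disabled
    train, val = split.get("train_percent"), split.get("val_percent")
    for name, pct in (("train_percent", train), ("val_percent", val)):
        # bool is a subclass of int in Python, so reject it explicitly.
        if not isinstance(pct, int) or isinstance(pct, bool) or not 0 <= pct <= 100:
            raise ValueError(f"{name} must be an integer in [0, 100], got {pct!r}")
    if train + val != 100:
        raise ValueError(f"train_percent + val_percent must be 100, got {train + val}")


def approx_split_counts(total: int, train_percent: int) -> Tuple[int, int]:
    """Rough (train, val) sizes using floor rounding; PaddleX may round differently."""
    train = total * train_percent // 100
    return train, total - train


if __name__ == "__main__":
    validate_split({"enable": True, "train_percent": 90, "val_percent": 10})
    # The demo dataset has 701 + 176 = 877 samples.
    print(approx_split_counts(877, 90))
```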

4.2 Model Training

Model training can be completed with a single command, taking the training of the object detection model PicoDet-S as an example:

```bash
python main.py -c paddlex/configs/modules/object_detection/PicoDet-S.yaml \
    -o Global.mode=train \
    -o Global.dataset_dir=./dataset/det_coco_examples
```

- Specify the `.yaml` configuration file path for the model (here it is `PicoDet-S.yaml`). When training other models, specify the corresponding configuration file; the mapping between models and configuration files can be found in the PaddleX Model List (CPU/GPU).
- Set the mode to model training: `-o Global.mode=train`
- Specify the path to the training dataset: `-o Global.dataset_dir`
- Other related parameters can be set by modifying the `Global` and `Train` fields in the `.yaml` configuration file, or adjusted by appending parameters on the command line. For example, to train on the first two GPUs: `-o Global.device=gpu:0,1`; to set the number of training epochs to 10: `-o Train.epochs_iters=10`. For more modifiable parameters and their detailed explanations, refer to the configuration file instructions for the corresponding task module, PaddleX Common Configuration File Parameters.
- New Feature: Paddle 3.0 supports CINN (Compiler Infrastructure for Neural Networks) to accelerate training on GPU devices. Specify `-o Train.dy2st=True` to enable it.
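Device strings in this guide follow a `type:ids` pattern: `gpu:0`, `npu:0`, `cpu`, and `gpu:0,1` for multi-card training. A hypothetical parser for that pattern is sketched below; PaddleX performs its own parsing internally, so treat this only as a description of the string format.

```python
from typing import List, Tuple


def parse_device(device: str) -> Tuple[str, List[int]]:
    """Split 'gpu:0,1' into ('gpu', [0, 1]); 'cpu' yields ('cpu', [])."""
    kind, _, ids = device.strip().partition(":")
    if not kind:
        raise ValueError(f"missing device type in {device!r}")
    # Card IDs are comma-separated integers after the colon, if any.
    card_ids = [int(part) for part in ids.split(",") if part.strip()] if ids else []
    return kind.lower(), card_ids


if __name__ == "__main__":
    for spec in ("gpu:0,1", "npu:0", "cpu"):
        print(spec, "->", parse_device(spec))
```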


- During model training, PaddleX automatically saves the model weight files to `output` by default. To specify a different save path, set the `Global.output` field in the configuration file or append `-o Global.output` on the command line.
- PaddleX shields you from the concepts of dynamic graph weights and static graph weights. During model training, both dynamic and static graph weights are produced, and static graph weights are used by default for model inference.
- After model training completes, all outputs are saved in the specified output directory (default `./output/`), typically including:
  - `train_result.json`: Training result record file, recording whether the training task completed normally, as well as the output weight metrics, related file paths, etc.;
  - `train.log`: Training log file, recording changes in model metrics and loss during training;
  - `config.yaml`: Training configuration file, recording the hyperparameter configuration for this training session;
  - `.pdparams`, `.pdema`, `.pdopt.pdstate`, `.pdiparams`, `.json`: Model weight-related files, including network parameters, optimizer, EMA, static graph network parameters, static graph network structure, etc.;
- Notice: Since Paddle 3.0.0, the static graph network structure is stored as JSON (the current `.json` file) instead of protobuf (the former `.pdmodel` file), for compatibility with PIR and greater flexibility and scalability.

4.3 Model Evaluation

After completing model training, you can evaluate the specified model weights file on the validation set to verify the model's accuracy. Using PaddleX for model evaluation can be done with a single command:

```bash
python main.py -c paddlex/configs/modules/object_detection/PicoDet-S.yaml \
    -o Global.mode=evaluate \
    -o Global.dataset_dir=./dataset/det_coco_examples
```

- Specify the `.yaml` configuration file path for the model (here it is `PicoDet-S.yaml`)
- Specify the mode as model evaluation: `-o Global.mode=evaluate`
- Specify the path to the validation dataset: `-o Global.dataset_dir`. Other related parameters can be set by modifying the `Global` and `Evaluate` fields in the `.yaml` configuration file. For details, refer to PaddleX Common Model Configuration File Parameter Description.


When evaluating the model, you need to specify the model weights file path. Each configuration file has a default weight save path built-in. If you need to change it, simply set it by appending a command line parameter, such as -o Evaluate.weight_path=./output/best_model/best_model.pdparams.

After completing the model evaluation, an evaluate_result.json file will be generated, which records the evaluation results, specifically whether the evaluation task was completed successfully and the model's evaluation metrics, including AP.
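AP is computed by matching predicted boxes to ground-truth boxes by Intersection over Union (IoU). COCO-style mAP, as conventionally reported on COCO2017, averages AP over IoU thresholds from 0.50 to 0.95 in steps of 0.05. For intuition, here is a minimal IoU function for boxes given as `[x_min, y_min, x_max, y_max]`, the layout assumed for the `coordinate` field in the prediction output. It is an illustrative sketch, not the evaluator that PaddleX uses.

```python
from typing import Sequence


def iou(box_a: Sequence[float], box_b: Sequence[float]) -> float:
    """Intersection over Union of two [x_min, y_min, x_max, y_max] boxes."""
    # Overlap rectangle; its width or height is clamped to 0 when boxes are disjoint.
    ix_min, iy_min = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix_max, iy_max = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


# COCO-style thresholds used when averaging AP: 0.50, 0.55, ..., 0.95.
COCO_IOU_THRESHOLDS = [round(0.5 + 0.05 * i, 2) for i in range(10)]

if __name__ == "__main__":
    # Two 10x10 boxes overlapping in a 5x5 square: 25 / 175, about 0.1429.
    print(iou([0, 0, 10, 10], [5, 5, 15, 15]))
```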

4.4 Model Inference and Integration

After completing model training and evaluation, you can use the trained model weights for inference predictions or Python integration.

4.4.1 Model Inference

To perform inference through the command line, run `main.py` in prediction mode. Before running it, download the demo image to your local machine. Similar to model training and evaluation, the following settings are required:

- Specify the `.yaml` configuration file path for the model (here it is `PicoDet-S.yaml`)
- Specify the mode as model inference prediction: `-o Global.mode=predict`
- Specify the model weights path: `-o Predict.model_dir="./output/best_model/inference"`
- Specify the input data path: `-o Predict.input="..."`

Other related parameters can be set by modifying the `Global` and `Predict` fields in the `.yaml` configuration file. For details, refer to PaddleX Common Model Configuration File Parameter Description.
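Putting those settings together, the prediction command can be assembled in Python as below. This is a sketch under assumptions: it assumes you run from the PaddleX source root where `main.py` lives, it reuses the demo image name from Quick Integration as the default input, and the helper name `build_predict_cmd` is hypothetical. Replace the input with your own file.

```python
import shlex
from typing import List


def build_predict_cmd(
    config: str = "paddlex/configs/modules/object_detection/PicoDet-S.yaml",
    model_dir: str = "./output/best_model/inference",
    input_path: str = "general_object_detection_002.png",
) -> List[str]:
    """Assemble the main.py prediction command from the documented -o settings."""
    return [
        "python", "main.py", "-c", config,
        "-o", "Global.mode=predict",
        "-o", f"Predict.model_dir={model_dir}",
        "-o", f"Predict.input={input_path}",
    ]


if __name__ == "__main__":
    # Print a shell-ready version of the command for inspection.
    print(shlex.join(build_predict_cmd()))
```

Passing arguments as a list avoids the shell quoting seen in the bullets above; to execute the command, hand the list to `subprocess.run(cmd, check=True)`.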

4.4.2 Model Integration

The model can be directly integrated into the PaddleX pipelines or directly into your own project.

1. Pipeline Integration

The object detection module can be integrated into the General Object Detection Pipeline of PaddleX. Simply replace the model path to update the object detection module of the relevant pipeline. In pipeline integration, you can use high-performance inference and serving deployment to deploy your model.

2. Module Integration

The weights you produce can be directly integrated into the object detection module. Refer to the Python example code in Quick Integration, and simply replace the model with the path to your trained model.

You can also use the PaddleX high-performance inference plugin to optimize the inference process of your model and further improve efficiency. For detailed procedures, please refer to the PaddleX High-Performance Inference Guide.