Server Deployment

OCR Pipeline WebService

PaddleOCR provides two service deployment methods:

- Based on PaddleHub Serving: the code path is "./deploy/hubserving". Please refer to the tutorial.
- Based on PaddleServing: the code path is "./deploy/pdserving". Please follow this tutorial.

Service deployment based on PaddleServing

This document describes how to use PaddleServing to deploy the PPOCR dynamic graph model as an online pipeline service.

Some key features of Paddle Serving:

- Integrates seamlessly with the Paddle training pipeline; most Paddle models can be deployed with a single command.
- Supports industrial serving features such as model management, online loading, and online A/B testing.
- Supports highly concurrent, efficient communication between clients and servers.

PaddleServing supports deployment in multiple languages. This example provides two deployment methods, Python pipeline and C++. They compare as follows:

| Language | Speed | Secondary development | Compilation required |
|---|---|---|---|
| C++ | Fast | Slightly difficult | Not required for single-model prediction; required for multi-model concatenation |
| Python | Moderate | Easy | Not required for single-model or multi-model |

For an introduction to the Paddle Serving deployment framework and its tutorials, refer to the official documentation.

Environment preparation

Both the PaddleOCR runtime environment and the Paddle Serving runtime environment are required.

- Prepare the PaddleOCR runtime environment (see the reference link). Download the paddlepaddle whl package that matches your environment; version 2.2.2 is recommended.

- Prepare the PaddleServing runtime environment as follows:

Note: To install the latest version of PaddleServing, refer to the link.
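After both environments are installed, you can check that the expected Python packages are visible to the interpreter. The sketch below does this. It assumes the import names paddle, paddle_serving_server, paddle_serving_client and paddle_serving_app. These names are inferred from the pip package names and may differ between PaddleServing versions or between CPU and GPU builds.

```python
import importlib.util

# Assumed import names, inferred from the pip package names; the GPU server
# build in particular may expose a different module name in some versions.
REQUIRED_MODULES = [
    "paddle",
    "paddle_serving_server",
    "paddle_serving_client",
    "paddle_serving_app",
]


def check_modules(names):
    """Return a dict mapping each module name to True if it can be found."""
    status = {}
    for name in names:
        # find_spec returns None when a top-level module cannot be located;
        # it does not import the module, so the check has no side effects.
        status[name] = importlib.util.find_spec(name) is not None
    return status


if __name__ == "__main__":
    for module, found in check_modules(REQUIRED_MODULES).items():
        print(f"{module}: {'OK' if found else 'MISSING'}")
```

A module reported as MISSING usually means that its whl package was installed into a different Python environment.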

Model conversion

When using PaddleServing for service deployment, you need to convert the saved inference model into a serving model that is easy to deploy.

First, download the PPOCR inference model.

Then, use the installed paddle_serving_client tool to convert the inference model into a serving model.
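The sketch below shows the conversion step in Python. It rests on several assumptions. The converter is assumed to run as the paddle_serving_client.convert module with the flags --dirname, --model_filename, --params_filename, --serving_server and --serving_client. The inference model files are assumed to be named inference.pdmodel and inference.pdiparams. Check the flags with --help for your installed version. The input directory names and the ppocr_rec_v3_serving folder are hypothetical placeholders that follow the detection naming.

```python
import shlex
import sys


def build_convert_command(model_dir, serving_server, serving_client,
                          model_filename="inference.pdmodel",
                          params_filename="inference.pdiparams"):
    """Build the argument list for the (assumed) paddle_serving_client.convert CLI."""
    return [
        sys.executable, "-m", "paddle_serving_client.convert",
        "--dirname", model_dir,
        "--model_filename", model_filename,
        "--params_filename", params_filename,
        "--serving_server", serving_server,
        "--serving_client", serving_client,
    ]


if __name__ == "__main__":
    # Hypothetical input directories; replace them with the models you downloaded.
    jobs = [
        ("./det_infer/", "./ppocr_det_v3_serving/", "./ppocr_det_v3_client/"),
        ("./rec_infer/", "./ppocr_rec_v3_serving/", "./ppocr_rec_v3_client/"),
    ]
    for model_dir, server_dir, client_dir in jobs:
        # Print only; run each list with subprocess.run(cmd, check=True)
        # once the paths are correct.
        print(shlex.join(build_convert_command(model_dir, server_dir, client_dir)))
```

Building the command as a list instead of a single string avoids shell-quoting problems when paths contain spaces.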

After the detection model is converted, two additional folders, ppocr_det_v3_serving and ppocr_det_v3_client, appear in the current folder with the following structure:

The recognition model is converted in the same way.
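To check the conversion output programmatically, the sketch below looks for each folder's configuration file. The client file name serving_client_conf.prototxt appears later in this document. The server file name serving_server_conf.prototxt is an assumption, so adjust EXPECTED_FILES to match what your converter actually produces.

```python
from pathlib import Path

# serving_client_conf.prototxt is named in this document;
# serving_server_conf.prototxt is an assumed name for the server side.
EXPECTED_FILES = {
    "server": ["serving_server_conf.prototxt"],
    "client": ["serving_client_conf.prototxt"],
}


def missing_files(server_dir, client_dir):
    """Return paths of expected config files that are not present."""
    missing = []
    for kind, folder in (("server", Path(server_dir)), ("client", Path(client_dir))):
        for name in EXPECTED_FILES[kind]:
            path = folder / name
            if not path.is_file():
                missing.append(str(path))
    return missing


if __name__ == "__main__":
    for prefix in ("ppocr_det_v3", "ppocr_rec_v3"):
        gaps = missing_files(f"{prefix}_serving", f"{prefix}_client")
        print(prefix, "OK" if not gaps else f"missing: {gaps}")
```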

Paddle Serving pipeline deployment

- Download the PaddleOCR code. If you have already downloaded it, skip this step.

The pdserving directory contains the code to start the pipeline service and send prediction requests, including:

- Run the following command to start the service:

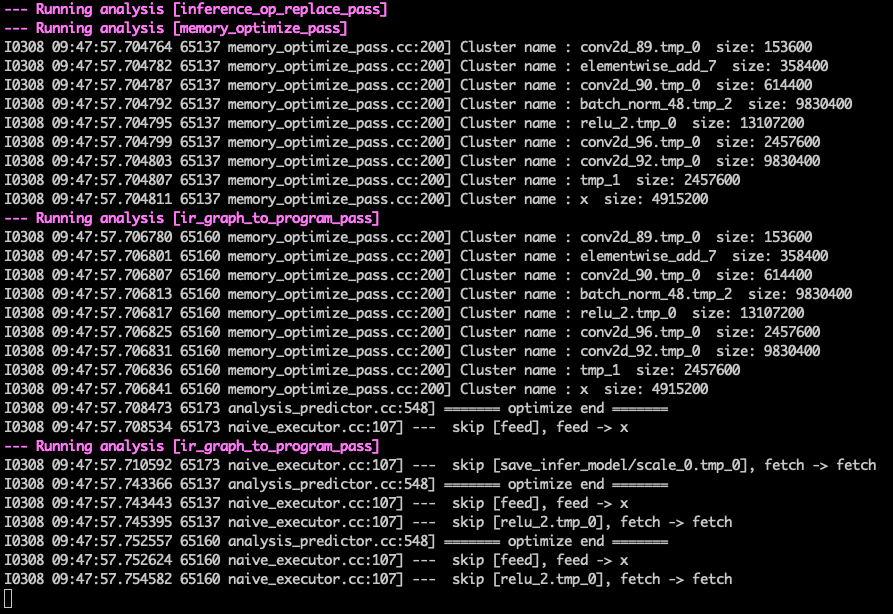

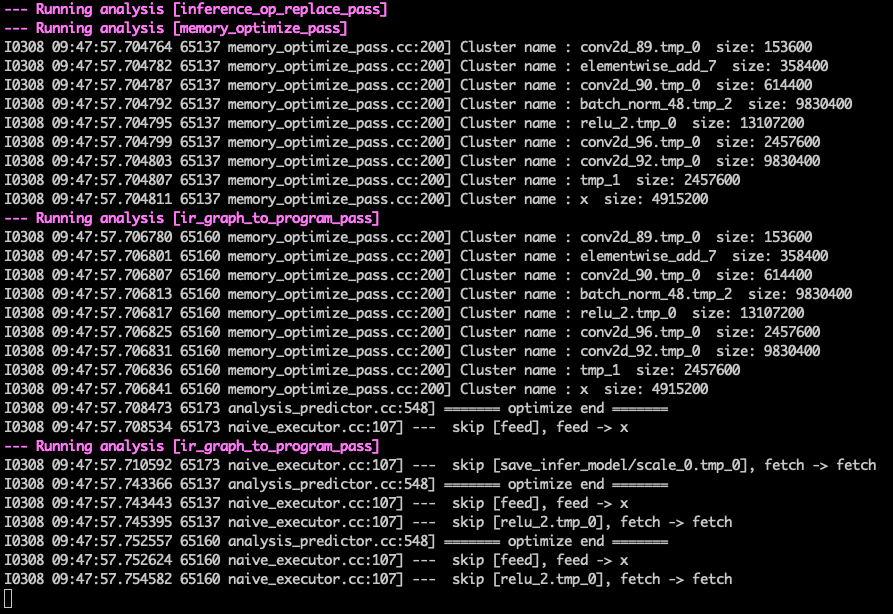

After the service starts successfully, a log similar to the following is written to log.txt:
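The service runs in the background, so a client started immediately afterward may fail while the server is still loading models. The sketch below polls the HTTP port until it accepts TCP connections. It only confirms that something is listening, not that the models loaded successfully, so log.txt remains the authoritative check. Poll the port set by the http_port key in your config.yml. Both that key name and the port 9998 in the usage example are assumptions.

```python
import socket
import time


def wait_for_port(host, port, timeout=30.0, interval=0.5):
    """Poll until a TCP connection to host:port succeeds or timeout expires.

    Returns True if the port accepted a connection, False on timeout.
    """
    deadline = time.monotonic() + timeout
    while True:
        try:
            # create_connection raises OSError (e.g. ConnectionRefusedError)
            # while nothing is listening on the port yet.
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval)
```

For example, wait_for_port("127.0.0.1", 9998, timeout=60) returns True once the server accepts connections and False if it is still unreachable after a minute.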

- Send a service request:

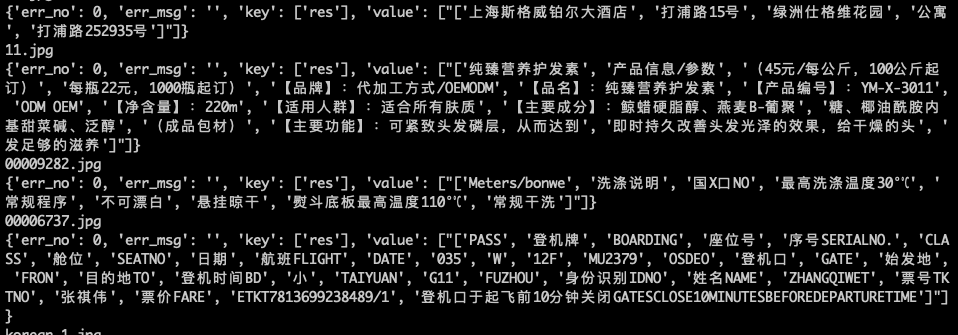
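The sketch below shows what an HTTP client sends, using only the Python standard library. The image is read as bytes, base64-encoded, and posted as JSON. The endpoint http://127.0.0.1:9998/ocr/prediction and the {"key": ["image"], "value": [...]} body layout are assumptions modeled on the pipeline HTTP client, not a verified API contract. Compare them with the client script in the pdserving directory before relying on them.

```python
import base64
import json
import urllib.request

# Assumed endpoint: default host/port and an "ocr" service name; adjust to
# match the settings in your config.yml.
URL = "http://127.0.0.1:9998/ocr/prediction"


def build_payload(image_bytes):
    """Encode raw image bytes into the assumed key/value JSON body."""
    image_b64 = base64.b64encode(image_bytes).decode("utf8")
    return {"key": ["image"], "value": [image_b64]}


def predict(image_path, url=URL, timeout=30):
    """POST one image to the pipeline service and return the decoded JSON reply."""
    with open(image_path, "rb") as f:
        body = json.dumps(build_payload(f.read())).encode("utf8")
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf8"))
```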

After a successful run, the model's prediction results are printed in the command window. An example result:

Adjust the concurrency settings in config.yml to maximize QPS. Generally, detection and recognition concurrency should be set in a 2:1 ratio.
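A small helper like the one below turns the 2:1 guideline into concrete numbers. It assumes the relevant part of config.yml has an op section with det and rec entries, each carrying a concurrency value, so check your config.yml for the actual key layout. The helper only splits a worker budget you choose. It does not measure which split gives the highest QPS.

```python
def split_concurrency(total_workers, det_ratio=2, rec_ratio=1):
    """Split a worker budget between det and rec using a det:rec ratio.

    Returns (det, rec), each at least 1 and summing to total_workers.
    """
    if total_workers < 2:
        raise ValueError("need at least one worker for each op")
    share = round(total_workers * det_ratio / (det_ratio + rec_ratio))
    det = min(total_workers - 1, max(1, share))
    return det, total_workers - det


def apply_to_config(config, total_workers):
    """Write the split into a dict mirroring the assumed config.yml layout:

    op:
      det: {concurrency: ...}
      rec: {concurrency: ...}
    """
    det, rec = split_concurrency(total_workers)
    config["op"]["det"]["concurrency"] = det
    config["op"]["rec"]["concurrency"] = rec
    return config
```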

Multiple service requests can be sent at the same time if necessary.
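The sketch below sends several requests at once with a thread pool and measures the resulting throughput. Here send is a placeholder for any function that posts one image and returns the reply. The QPS it reports is a rough client-side figure based on wall-clock time, not the server-side numbers recorded in the tracer log.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_concurrently(send, items, workers=4):
    """Call send(item) for every item using a thread pool.

    Returns (results, qps): results keep the order of items, and qps is the
    number of completed calls per second of wall-clock time.
    """
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(send, items))
    elapsed = time.perf_counter() - start
    qps = len(results) / elapsed if elapsed > 0 else float("inf")
    return results, qps
```

For example, run_concurrently(send, image_paths, workers=8) (with image_paths a hypothetical list of files) returns the replies in input order together with the observed QPS.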

Prediction performance data is automatically written to the PipelineServingLogs/pipeline.tracer file. In a test on 200 real images, with the long side limited to 960 for detection, the average QPS on a T4 GPU reached around 23:

C++ Serving

Python-based service deployment has the clear advantage of convenient secondary development. However, real applications often require better performance, so PaddleServing also provides a higher-performance C++ deployment option.

C++ service deployment is the same as Python deployment in the environment setup and data preparation stages. The differences are in how the service is started and how the client sends requests.

- Compile Serving

To improve prediction performance, the C++ service also supports multi-model concatenation. Unlike the Python Pipeline service, multi-model concatenation requires the model pre- and post-processing code to be written on the server side, so Serving must be recompiled locally. For details, refer to the official document: how to compile Serving.

- Run the following command to start the service:

After the service starts successfully, a log similar to the following is written to log.txt:

- Send a service request:

Pre- and post-processing run in the C++ server. To speed things up, the only input to the C++ server is the base64-encoded string of the image. You therefore need to manually change the feed_type and shape fields in ppocr_det_v3_client/serving_client_conf.prototxt to the following:

Start the client:

```bash
# Send requests to the C++ server using the detection and recognition client configs
python3 ocr_cpp_client.py ppocr_det_v3_client ppocr_rec_v3_client
```
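The manual edit of the feed_type and shape fields in ppocr_det_v3_client/serving_client_conf.prototxt can also be scripted before starting the client, as sketched below. The sketch assumes the file is in text format with a single feed_var { ... } block containing one feed_type line and one shape line per dimension. Pass the target values for your model as arguments. Only the feed_var block is modified, so any shape lines under fetch_var are left unchanged.

```python
import re
from pathlib import Path


def set_feed_fields(conf_text, feed_type, shape):
    """Rewrite feed_type and shape inside the first feed_var block.

    conf_text is the prototxt content; shape is a list of ints.
    Text outside the feed_var block (e.g. fetch_var) is not touched.
    """
    match = re.search(r"feed_var\s*\{(.*?)\}", conf_text, flags=re.DOTALL)
    if match is None:
        raise ValueError("no feed_var block found")
    body = match.group(1)
    # Replace the existing feed_type value in place.
    body, count = re.subn(r"(feed_type:\s*)-?\d+", rf"\g<1>{feed_type}", body)
    if count == 0:
        raise ValueError("feed_var block has no feed_type field")
    # Drop every existing shape line; the new ones are appended below.
    body = re.sub(r"\n[ \t]*shape:[ \t]*-?\d+", "", body)
    new_shape = "".join(f"  shape: {dim}\n" for dim in shape)
    body = body.rstrip() + "\n" + new_shape
    return conf_text[:match.start(1)] + body + conf_text[match.end(1):]


def patch_client_conf(path, feed_type, shape):
    """Apply set_feed_fields to a prototxt file in place."""
    conf = Path(path)
    text = conf.read_text(encoding="utf8")
    conf.write_text(set_feed_fields(text, feed_type, shape), encoding="utf8")
```

Keep a backup copy of the original prototxt, because patch_client_conf overwrites the file.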

After a successful run, the model's prediction results are printed in the command window. An example result:

Windows Users

Windows does not support Pipeline Serving. To launch Paddle Serving on Windows, use Web Service instead. For more information, refer to Paddle Serving for Windows Users.

Windows users can only use version 0.5.0 in CPU mode.

Preparation stage:

- Start the server

- Send client requests

FAQ

Q1: No result is returned after sending the request.

A1: Do not set a proxy when starting the service or sending requests. Disable the proxy before starting the service and before sending requests. The command to disable the proxy is:
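If you launch the service or client from a Python script, you can keep a proxy out of the picture by starting the child process with a copy of the environment that has the proxy variables removed, as sketched below. The variable names covered (http_proxy, https_proxy and all_proxy, in any letter case) are common conventions, not an exhaustive list.

```python
import os

# Common proxy variable names; matching is case-insensitive because tools
# honor both the lower-case and upper-case forms.
PROXY_VARS = ("http_proxy", "https_proxy", "all_proxy")


def env_without_proxy(env=None):
    """Return a copy of env (default: os.environ) with proxy variables removed."""
    source = os.environ if env is None else env
    blocked = {name.lower() for name in PROXY_VARS}
    return {k: v for k, v in source.items() if k.lower() not in blocked}
```

For example, subprocess.run(["python3", "client.py"], env=env_without_proxy()) runs a client without inherited proxy settings. The script name here is only an illustration.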